Last updated 5 months ago

Zero-Knowledge Data Validator (Reference DApp)

Problem

Buyers cannot verify dataset quality (schema, bias, nulls) without seeing it, while sellers risk theft if they reveal raw data. This trust gap halts the exchange of AI training data.

Solution

A Reference DApp where sellers use Midnight ZKPs to mathematically prove dataset quality without revealing raw content. It iterates through the private file to verify it and publishes only the result.

About this idea

[Proposal setup] Proposal title

Please provide your proposal title

Zero-Knowledge Data Validator (Reference DApp)

[Proposal Summary] Time

Please specify how many months you expect your project to last

3

[Proposal Summary] Translation Information

Please indicate if your proposal has been auto-translated

No

Original Language

en

[Proposal Summary] Problem Statement

What is the problem you want to solve?

Buyers cannot verify dataset quality (schema, bias, nulls) without seeing it, while sellers risk theft if they reveal raw data. This trust gap halts the exchange of AI training data.

[Proposal Summary] Project Dependencies

Does your project have any dependencies on other organizations, technical or otherwise?

No

Describe any dependencies or write 'No dependencies'

No dependencies

[Proposal Summary] Project Open Source

Will your project's outputs be fully open source?

Yes

Please provide here more information on the open source status of your project outputs

Apache 2.0. Fully open source.

[Theme Selection] Theme

Please choose the most relevant theme and tag related to the outcomes of your proposal

Security

[Campaign Category] Category Questions

What is useful about your DApp within one of the specified industry or enterprise verticals?

This project directly targets the AI space by solving the "Trust Paradox" in sourcing AI training data. Currently, data scientists and AI labs face a difficult choice when buying training data: either buy it "blind" and risk getting low-quality data, or ask for a sample and risk the seller refusing due to piracy concerns.

Our DApp solves this by letting the seller prove the data is clean and balanced, for example, proving it has zero empty rows or an equal distribution of categories, without revealing a single line of the actual content.

This "Verify-First" model unlocks a secure supply chain for AI data. Also, this project is an important educational tool. It teaches developers the fundamental pattern of "Private Validation."

By showing how to loop through a private list and check for errors, we provide a reusable template that can be adapted for supply chain, financial audits, or any other industry that relies on verifying tabular data.

What exactly will you build? List the Compact contract(s) and key functions/proofs, the demo UI flow, Lace (Midnight) wallet integration, and your basic test plan.

We will build a functional Reference DApp called the Zero-Knowledge Data Validator that allows users to prove the quality of a CSV dataset while keeping the data itself completely private.

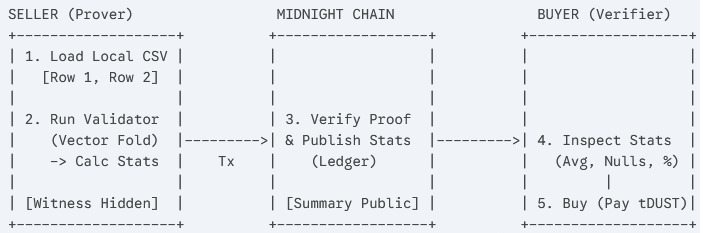

The core component is a Compact smart contract that ingests a private file as a witness and runs three specific validation circuits. The first is a schema check to ensure the file structure is correct. The second is a quality check that loops through every row to prove there are no null values or errors. The third is a statistics check that calculates and reveals aggregate data, such as the average value or class distribution, to prove the dataset is unbiased.
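The three checks can be sketched in plain TypeScript to make the intended logic concrete. This is only an off-chain model under our own assumptions, not Compact code: the names `validateDataset` and `ValidationReport`, the in-memory row format, and the choice of class counts as the revealed statistic are all hypothetical.

```typescript
// Hypothetical off-chain model of the three checks; this is NOT Compact code.
type Row = (string | null)[];

interface ValidationReport {
  schemaOk: boolean;                    // result of the schema check
  nullFree: boolean;                    // result of the quality check
  classCounts: Record<string, number>;  // aggregate revealed by the statistics check
}

function validateDataset(
  header: string[],
  rows: Row[],
  expectedHeader: string[],
  labelColumn: string,
): ValidationReport {
  // 1. Schema check: column names match in order, and every row has the same width.
  const schemaOk =
    header.length === expectedHeader.length &&
    header.every((name, i) => name === expectedHeader[i]) &&
    rows.every((row) => row.length === header.length);

  // 2. Quality check: visit every row and reject null or blank cells.
  const nullFree = rows.every((row) =>
    row.every((cell) => cell !== null && cell.trim() !== ""),
  );

  // 3. Statistics check: count rows per class; only these counts would be published.
  const labelIndex = header.indexOf(labelColumn);
  const classCounts: Record<string, number> = {};
  if (labelIndex >= 0) {
    for (const row of rows) {
      const label = row[labelIndex];
      if (label === null || label === undefined) continue;
      classCounts[label] = (classCounts[label] ?? 0) + 1;
    }
  }

  return { schemaOk, nullFree, classCounts };
}
```

In the DApp itself, the rows would stay on the seller's machine as the witness, and only the fields of the report would become public.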

The application will feature a simple web interface integrated with the Lace wallet. A seller can load a file from their computer, letting the browser generate a zero-knowledge proof locally, and then publish the verified metadata to the Midnight testnet. A buyer can then view these "Verified" listings and pay to access the data, with the UI simulating the final key release.

Our testing plan is designed to prove that the validation logic is watertight. We will create automated tests that attempt to submit broken or malicious datasets to ensure the contract correctly rejects them, and we will verify that the raw data inputs remain invisible to the network throughout the entire process.
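A minimal sketch of what such negative tests could look like is shown below. The `acceptsDataset` function is a hypothetical stand-in for the contract's acceptance logic, and the case list is illustrative rather than the final test suite.

```typescript
// Hypothetical negative-test harness; acceptsDataset stands in for the real contract call.
type Row = (string | null)[];
type Validator = (rows: Row[], columns: number) => boolean;

const acceptsDataset: Validator = (rows, columns) =>
  rows.length > 0 &&
  rows.every(
    (row) =>
      row.length === columns &&
      row.every((cell) => cell !== null && cell.trim() !== ""),
  );

interface NegativeCase {
  name: string;
  rows: Row[];
  columns: number;
}

// Datasets that must always be rejected.
const negativeCases: NegativeCase[] = [
  { name: "empty dataset", rows: [], columns: 2 },
  { name: "null cell", rows: [["1", null]], columns: 2 },
  { name: "blank cell", rows: [["1", "  "]], columns: 2 },
  { name: "ragged row", rows: [["1", "a"], ["2"]], columns: 2 },
];

// Returns the names of cases the validator wrongly accepted (empty means all were rejected).
function runNegativeCases(validator: Validator, cases: NegativeCase[]): string[] {
  return cases.filter((c) => validator(c.rows, c.columns)).map((c) => c.name);
}
```

Keeping the cases in a table makes it easy to add a new malformed dataset without touching the harness.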

How will other developers learn from and reuse your repo? Describe repo structure, README contents, docs/tutorials, test instructions, and extension points. Which developer personas benefit, and how will you gauge impact (forks, stars, issues, remixes)?

Our repository will be structured as a standard educational resource, prioritizing clarity and simplicity over complexity. The root directory will contain the contract folder holding the DataValidator.compact source code, a ui folder for the React-based marketplace interface, and a scripts folder containing the TypeScript test harnesses.

The core of our documentation will be a "Private Validation" guide. Unlike standard tutorials that show simple value transfers, this guide will teach developers the specific pattern of "Vector Folding": how to loop through a private dataset to verify its structure and statistics without revealing the data itself. We will provide line-by-line comments explaining how to parse a witness (the private CSV file) and how to write custom logic for different data types.
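To illustrate the "Vector Folding" idea, the sketch below folds a column of values into a small accumulator and derives the public result from it. It is written in plain TypeScript for readability and is not the Compact implementation; `FoldState`, `step`, and `foldColumn` are hypothetical names.

```typescript
// Hypothetical illustration of folding a private column into a small public summary.
interface FoldState {
  rowCount: number;    // rows visited so far
  sum: number;         // running sum of valid values
  sawInvalid: boolean; // set once any null or non-finite value is seen
}

const initialState: FoldState = { rowCount: 0, sum: 0, sawInvalid: false };

// One fold step: consume a single private value and return the updated accumulator.
function step(state: FoldState, value: number | null): FoldState {
  if (value === null || !Number.isFinite(value)) {
    return { ...state, rowCount: state.rowCount + 1, sawInvalid: true };
  }
  return {
    rowCount: state.rowCount + 1,
    sum: state.sum + value,
    sawInvalid: state.sawInvalid,
  };
}

// Only the validity flag and the mean leave this function; the values themselves do not.
function foldColumn(values: (number | null)[]): { valid: boolean; mean: number | null } {
  const final = values.reduce(step, initialState);
  const valid = !final.sawInvalid && final.rowCount > 0;
  return { valid, mean: valid ? final.sum / final.rowCount : null };
}
```

The accumulator stays the same size no matter how many rows are processed, which is what makes the pattern a natural fit for a loop inside a circuit.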

This project is specifically designed for Data Engineers and AI Developers entering the Web3 space who need to understand how to handle large arrays of private information. We will design the code with clear extension points where a developer can swap our "Bias Check" logic for their own "Outlier Detection" or "Variance Calculation" with just a few lines of code.
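One way such an extension point could look is a small check interface that each statistic implements. The sketch below is an assumption about the design, written in plain TypeScript rather than Compact; `StatCheck`, `biasCheck`, `outlierCheck`, and `runChecks` are hypothetical names.

```typescript
// Hypothetical extension-point sketch in plain TypeScript; not Compact code.
interface StatCheck {
  name: string;
  run(values: number[]): boolean;
}

// "Bias Check": the rarest class must occur at least minRatio times as often as the most common one.
function biasCheck(minRatio: number): StatCheck {
  return {
    name: "bias",
    run(values) {
      if (values.length === 0) return false;
      const counts = new Map<number, number>();
      for (const v of values) counts.set(v, (counts.get(v) ?? 0) + 1);
      const sizes = Array.from(counts.values());
      return Math.min(...sizes) / Math.max(...sizes) >= minRatio;
    },
  };
}

// "Outlier Detection": no value may lie more than maxDeviations standard deviations from the mean.
function outlierCheck(maxDeviations: number): StatCheck {
  return {
    name: "outliers",
    run(values) {
      if (values.length === 0) return false;
      const mean = values.reduce((a, b) => a + b, 0) / values.length;
      const variance = values.reduce((a, b) => a + (b - mean) ** 2, 0) / values.length;
      const limit = maxDeviations * Math.sqrt(variance);
      return values.every((v) => Math.abs(v - mean) <= limit);
    },
  };
}

// Swapping one check for another only changes the list passed in here.
function runChecks(values: number[], checks: StatCheck[]): Record<string, boolean> {
  const results: Record<string, boolean> = {};
  for (const check of checks) results[check.name] = check.run(values);
  return results;
}
```

For example, `runChecks(labels, [biasCheck(0.5)])` could become `runChecks(prices, [outlierCheck(2)])` without changing the surrounding code.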

We will measure the success of this project by the number of GitHub forks and specifically by "Remixes", tracking how many developers adapt our validator pattern to verify other types of assets like supply chain manifests or financial audit logs.

[Your Project and Solution] Solution

Please describe your proposed solution and how it addresses the problem

We propose a Reference DApp that acts as a "Zero-Knowledge Quality Control" layer for AI training data. Currently, data exchange is stuck in a "trust paradox" where buyers cannot verify the quality of a dataset (such as its schema, cleanliness, or class balance) without seeing it, and sellers cannot reveal the data without risking piracy. Our solution breaks this deadlock by moving the verification process to the seller's local machine using Midnight’s privacy-preserving smart contracts.

The core of our solution is a "Validator Circuit" that accepts a private file and runs a series of sanity checks, such as ensuring there are no empty rows and that the data distribution is balanced, before generating a cryptographic proof of these facts. This proof is published to the ledger alongside a cryptographic hash of the file, giving the buyer a mathematical guarantee that the data is high-quality and tamper-proof before they pay.
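The role of the published hash can be sketched as follows: the seller commits to the exact file bytes, and after purchase the buyer recomputes the hash of the delivered file and compares it with the listing. SHA-256 and the function names here are assumptions for illustration only; the hash function actually used inside the circuit may differ.

```typescript
import { createHash } from "node:crypto";

// Hypothetical helper: commit to the exact bytes of the dataset file.
// SHA-256 is an assumption for this sketch, not a statement about the on-chain design.
function datasetCommitment(fileBytes: Uint8Array): string {
  return createHash("sha256").update(fileBytes).digest("hex");
}

// Buyer-side check after delivery: does the received file match the published hash?
function matchesListing(received: Uint8Array, publishedHash: string): boolean {
  return datasetCommitment(received) === publishedHash;
}
```

If a single byte of the delivered file differs from the file that was validated, the comparison fails, which is what ties the quality proof to one specific dataset.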

By solving the specific problem of "blind" data purchasing, we provide a reusable infrastructure pattern that helps the Midnight ecosystem expand into the high-growth vertical of decentralized AI and secure data monetization.

[Your Project and Solution] Impact

Please define the positive impact your project will have on Midnight ecosystem

This project proves that Midnight is ready for real-world business use cases beyond simple value transfers. By building a tool for the AI training data market, we demonstrate a capability that transparent blockchains such as Ethereum cannot offer: the ability to verify the quality of a dataset without ever revealing the data itself. This solves a major trust issue in the data supply chain and invites a new demographic of data scientists and engineers to explore what they can build on Midnight.

Beyond the AI use case, the biggest long-term impact of this project is educational. We know that starting with Zero-Knowledge development is difficult, and most developers learn best by copying working examples. We are providing a clear, open-source template for "Private Validation": the logic of looping through a private file to check its metadata statistics.

A developer building a financial audit tool or a supply chain tracker can simply look at our code, copy the logic, and adapt it to their own industry. By giving builders a working pattern to start from, we reduce the frustration of learning a new language and help the developer ecosystem grow faster.

[Your Project and Solution] Capabilities & Feasibility

What is your capability to deliver your project with high levels of trust and accountability? How do you intend to validate if your approach is feasible?

Our team, operating as Bamboo Labs, has a history of successfully delivering grant-funded projects in decentralized ecosystems. We recently completed the Sign Language Translator AI (SLTA) project for Deep Funding (SingularityNet), where we were awarded 50,000 USD after ranking 3rd out of 91 submissions. We delivered ahead of schedule and exceeded all technical milestones. You can verify our previous successful proposal and project status at https://deepfunding.ai/proposal/sign-language-translator-ai-slta/ (note that while we have completed all milestones, the public site update may be pending).

Regarding feasibility, Bamboo Labs is composed of seasoned software engineers who have already extensively explored the Midnight architecture. We have validated the core primitives required for this project, specifically the Compact language's capability to handle vector operations. The community can further validate our technical credibility by reviewing our GitHub repositories and professional profiles.

[Final Pitch] Budget & Costs

Please provide a cost breakdown of the proposed work and resources

We propose a total budget of 10,000 USDM, allocated as 4,000 USDM for Milestone 1 (smart contract development), 4,000 USDM for Milestone 2 (UI and private witness integration), and 2,000 USDM for Milestone 3 (documentation, tutorials, and testing). The funds will be used to compensate the two Bamboo Labs engineers for their technical development hours. Regular updates on project progress, milestone completions, and demos will be shared through Bamboo Labs’ social channels to maintain transparency and Midnight community engagement.

[Final Pitch] Value for Money

How does the cost of the project represent value for the Midnight ecosystem?

At a total cost of 10,000 USDM, this project represents exceptional value because it functions as a permanent educational resource rather than just a single application. We are building a public utility that hundreds of future developers can use for free. If our code saves just twenty developers a week of frustration in figuring out how to validate private lists, the grant has already paid for itself in saved engineering time. We are providing a reusable pattern that can be adapted not just for AI, but also for financial audits, supply chain manifests, and identity verification, giving a substantial return on investment for the ecosystem.

Additionally, this investment is efficient because it is backed by a team with a proven track record. Having already delivered a 50,000 USD grant ahead of schedule for the SingularityNet ecosystem, we bring a level of reliability that minimizes risk for the Midnight Foundation.

[Self-Assessment] Self-Assessment Checklist

I confirm that the proposal clearly provides a basic prototype reference application for one of the areas of interest.

Yes

I confirm that the proposal clearly defines which part of the developer journey it improves and how it makes building on Midnight easier and more productive.

Yes

I confirm that the proposal explicitly states the chosen permissive open-source license (e.g., MIT, Apache 2.0) and commits to a public code repository.

Yes

I confirm that the team provides evidence of their technical ability and experience in creating developer tools or high-quality technical content (e.g., GitHub, portfolio).

Yes

I confirm that a plan for creating and maintaining clear, comprehensive documentation is a core part of the proposal's scope.

Yes

I confirm that the budget and timeline (3 months) are realistic for delivering the proposed tool or resource.

Yes

[Required Acknowledgements] Consent & Confirmation

I Agree

Yes

Team

Bamboo Labs consists of two core contributors for this project:

- Fitra Rahmani Khasyah - Software Engineer & Blockchain Developer

LinkedIn: https://www.linkedin.com/in/fitra-rahmani

GitHub: https://github.com/khasyah-fr

With 3 years of full-stack and Web3 development experience, Khasyah will lead the Compact smart contract implementation and front-end integration.

- Khresna Pandu Izzaturrahman - Research & AI Engineer

LinkedIn: https://www.linkedin.com/in/khresna-pandu/

GitHub: https://github.com/KhresnaPanduI

With 3 years of experience in research and AI engineering, Pandu will help develop the Compact smart contract and produce clear, developer-focused documentation and tutorials.